1 min read • from InfoQ

Cloudflare Builds High-Performance Infrastructure for Running LLMs

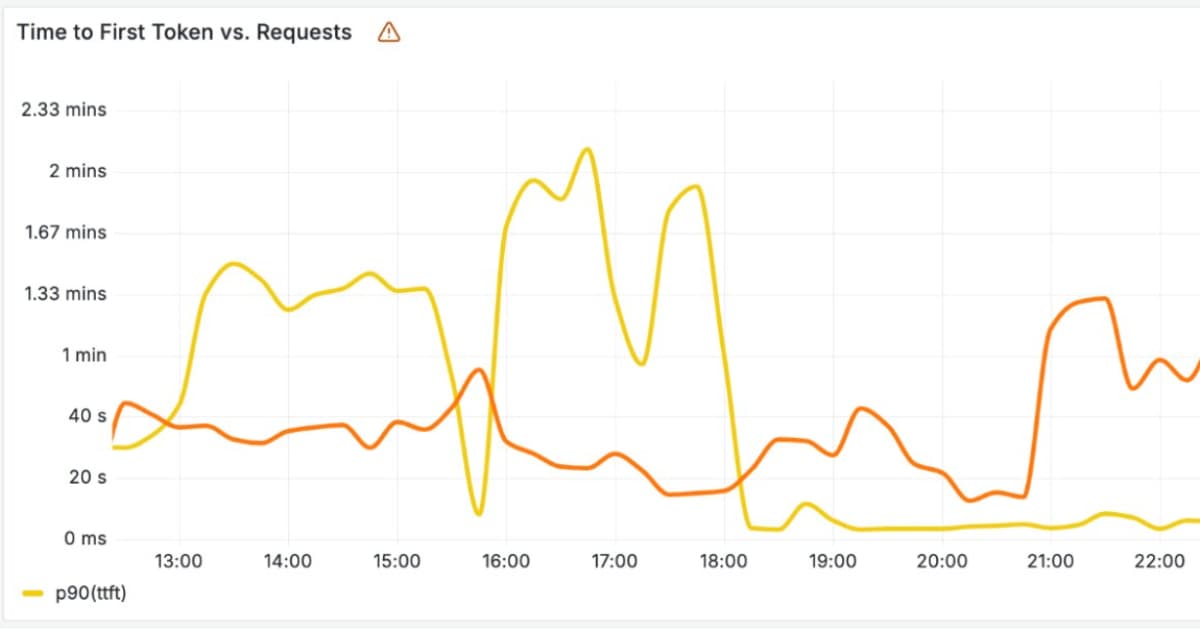

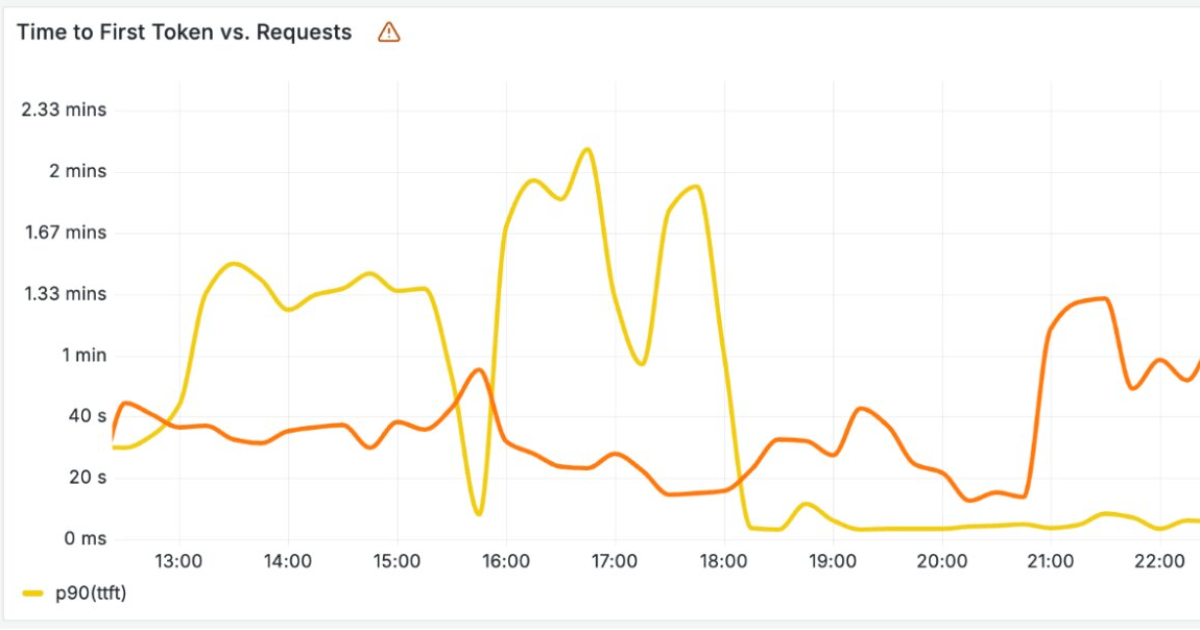

Cloudflare has announced new infrastructure for running large language models (LLMs) across its global network. These models depend on expensive hardware and must process large volumes of text in both directions: the prompt coming in and the generated response going out. To use that hardware more efficiently, Cloudflare split the two phases, running input processing and output generation on separate systems, each optimized for its own workload.
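Splitting the work this way is commonly described as separating the "prefill" phase (reading the whole prompt in one pass) from the "decode" phase (producing output one token at a time). The toy sketch below illustrates that general idea only; it is not Cloudflare's implementation. All names (`KVCache`, `prefill_worker`, `decode_worker`, `serve`) are hypothetical, the "model" is a placeholder that emits dummy tokens, and the in-process queue stands in for whatever transfer mechanism a real deployment would use between machines.

```python
from dataclasses import dataclass, field
from queue import Queue


@dataclass
class KVCache:
    """Hypothetical stand-in for the attention key/value state built during prefill."""
    request_id: int
    tokens: list[str] = field(default_factory=list)


def prefill_worker(request_id: int, prompt: str) -> KVCache:
    # Hypothetical "input processing" stage: consumes the entire prompt at once.
    # Here we only split on whitespace instead of running a real model.
    return KVCache(request_id=request_id, tokens=prompt.split())


def decode_worker(cache: KVCache, max_new_tokens: int) -> list[str]:
    # Hypothetical "output generation" stage: emits one token per step,
    # reusing and extending the cache handed over from the prefill stage.
    output = []
    for _ in range(max_new_tokens):
        next_token = f"tok{len(cache.tokens)}"  # placeholder for sampling from a model
        cache.tokens.append(next_token)
        output.append(next_token)
    return output


def serve(prompts: list[str], max_new_tokens: int = 3) -> dict[int, list[str]]:
    # The queue models the handoff of cached state from prefill to decode systems.
    handoff: Queue = Queue()
    for request_id, prompt in enumerate(prompts):
        handoff.put(prefill_worker(request_id, prompt))

    results: dict[int, list[str]] = {}
    while not handoff.empty():
        cache = handoff.get()
        results[cache.request_id] = decode_worker(cache, max_new_tokens)
    return results


print(serve(["hello world", "a"], max_new_tokens=2))
```

Because the two stages only share the handed-off cache, each can in principle run on hardware sized for its own workload: prefill is one large batch of work per request, while decode is many small sequential steps.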

By Renato Losio
